As expected, and in typical bureaucratic doublespeak, the Florida Department of Education used the report by the sole bidders for the allegedly independent review of the 2015 FSA test to magically deem that Florida's test scores could be used in the aggregate for school grades and teacher evaluations. Commissioner Pam Stewart, who has presided over much chaos and bears much responsibility for the many problems in the Common Core standard and testing fiasco, as well as for the lack of accuracy of her own statements about it, called this statement by Alpine "welcome news." However, even the authors of the study, who have multiple incestuous relationships to the development of Common Core standards and the testing industry, admit numerous problems with their work, blaming it all on a fast timeline or other factors beyond their control. Here are some of the most important issues:

"My name is Doug McRae, a retired testing specialist from Monterey.

The big question for Smarter Balanced test results is not the delay in release of the scores, or the relationships to old STAR data on the CDE website, but rather the quality of the Smarter Balanced scores now being provided to local districts and schools. These scores should be valid reliable and fair, as required by California statute as well as professional standards for large scale K-12 assessments. When I made a Public Records Request to the CDE last winter for documentation of validity reliability and fairness information for Smarter Balanced tests, either in CDE files or obtainable from the Smarter Balanced consortium, the reply letter in January said CDE had no such information in their files. I provided a copy of this interchange to the State Board at your January meeting. There has been no documentation for the validity, reliability, or fairness for Smarter Balanced tests released by Smarter Balanced, UCLA, or CDE since January, as far as I know.

Statewide test results should not be released in the absence of documented validity reliability and fairness of scores. Individual student reports should not be shared with parents or students before the technical quality of the scores is documented. But, the real longer lasting damage will be done if substandard information is placed in student cumulative academic records to follow students for their remaining years in school, to do damage for placement and instructional decisions and opportunities to learn, for years to come. To allow this to happen would be immoral, unethical, unprofessional, and to say the least, totally irresponsible. I would urge the State Board to take action today to prevent or (at the very least) to discourage local districts from placing 2015 Smarter Balanced scores in student permanent records until validity reliability and fairness characteristics are documented and made available to the public." [Emphasis added]

-

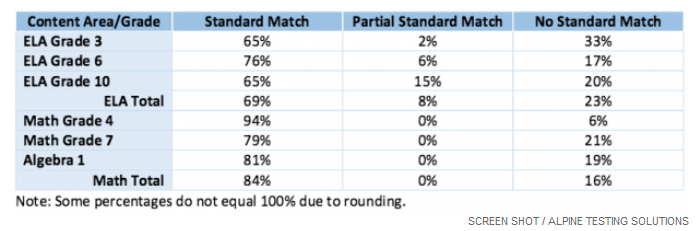

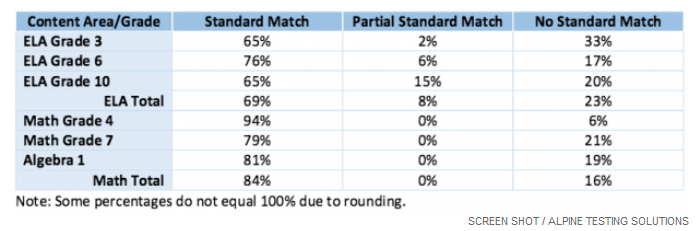

Misalignment Between Florida & Utah Standards & Test Questions - In addition, the FSA questions are aligned to Utah standards that were not necessarily taught in Florida. The executive summary says "the items were originally written to measure the Utah standards rather than the Florida standards. While alignment to Florida standards was confirmed for the majority of items reviewed via the item review study, many were not confirmed, usually because these items focused on slightly different content within the same anchor standards." (Emphasis added). Alignment studies were done for the very high-stakes 10th grade ELA, Algebra I, and 3rd grade reading tests that affect graduation and promotion, and the level of misalignment is alarming: 20% for the 10th grade ELA test, 19% for Algebra I, and a whopping 33% mismatch for the third grade test:

It is appalling that Florida students, teachers, and districts are going to be held to the results of these tests when there is such a high degree of mismatch. The report's recommendation to eliminate the Utah questions is, on the one hand, vital for the validity and fairness of results that have such important implications for Florida students and, on the other hand, problematic given the plan to rent Utah questions for two more years at the cost of another $10.8 million. In addition, significantly changing the test questions over several years will make the test unstable in terms of year-to-year consistency and usefulness for measuring academic growth, supposed hallmarks of these statewide tests that cost hundreds of millions of dollars.

-

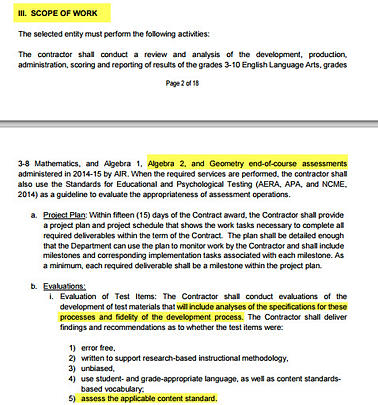

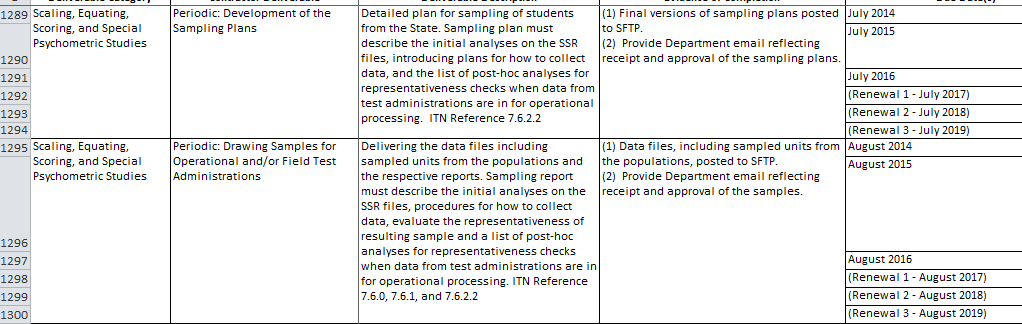

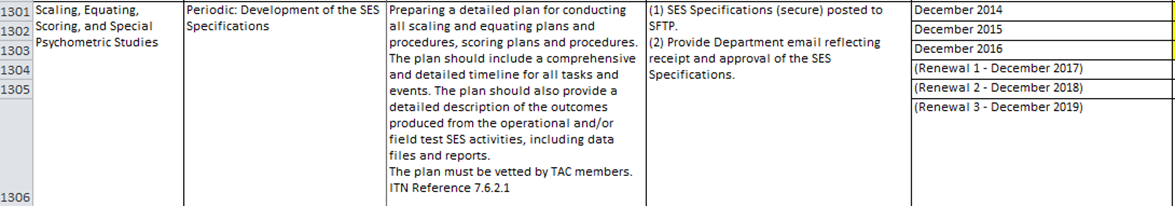

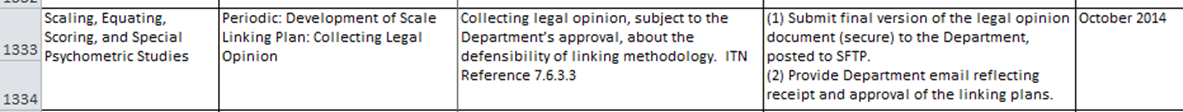

These validity studies were supposed to be done prior to the administration of the FSA, and Alpine sidestepped these issues. Note that field testing (8/2014), the plan for developing the scoring scales (12/2014), and even "collecting the legal opinion...about the defensibility of linking methodology" (10/2014) were supposed to be completed in mid to late 2014, before the test was given in March of 2015 (See more details HERE):

Alpine on the one hand tries to say that these studies were done well:

"Conclusion #5 Evaluation of Scaling, Equating, and Scoring

Following a review of the scaling, equating, and scoring procedures and methods for the FSA, and based on the evidence available at the time of this evaluation, the policies, procedures, and methods are generally consistent with expected practices as described in the Test Standards and other key sources that define best practices in the testing industry. Specifically, the measurement model used or planned to be used, as well as the rationale for the models was considered to be appropriate, as are the equating and scaling activities associated with the FSA."

However, the exceptions that follow, which appear to admit that at least some of these studies were not completed, render the initial conclusion almost meaningless:

"There are some notable exceptions to the breadth of our conclusion for this study. Specifically, evidence was not available at the time of this study to be able to evaluate evidence of criterion, construct, and consequential validity. These are areas where more comprehensive studies have yet to be completed. Classification accuracy and consistency were not available as part of this review because achievement standards have not yet been set for the FSA."

-

Vague instructions and technical glitches caused widespread stress and immeasurable damage for students - A Miami Herald article captured some of the many heart-wrenching frustrations of students and test administrators alike, with quotes such as the following:

"With SO many error messages and issues, frustration and stress levels were through the roof!"

"The report notes that in some schools, vague instructions meant entire grade levels had their math scores thrown out because students were allowed to use calculators when they shouldn't have, or used calculators that weren't allowed."

"One district reported that new drag-and-drop features didn't work and another said students weren't able to review their work."

"Districts wrote there was 'great confusion' because information was often given at the 'last minute.' One district wrote they were 'essentially flying blind' since they didn't even know what computer screens would look like on testing day."

"Survey results show the most frequent bug that schools had to contend with were unexpected computer crashes. Over and over again, students lost work in cyberspace when they were booted off the test. Sometimes their answers were recovered, sometimes they weren't. Though Etters said preliminary data shows all tests responses were captured, the problem knocked confidence in the exams."

-

Accommodations for special education students appear to have been purposely eliminated by FLDOE - The Miami Herald article made it appear that accommodations for students with disabilities were not going to be available due to technical issues with the test:

Mere weeks before the tests, school districts were told that text-to-speech accommodations for children with special needs wouldn't be available and districts scrambled to find adults who could read prompts aloud during testing.

However, the study made it clear that it was a conscious choice by FLDOE not to accommodate these students, a choice that will hinder their access to reading passages and leave them unable to demonstrate their abilities. This may well be a violation of the federal Individuals with Disabilities Education Act:

"Given the interpretation of "reading" by FLDOE, use of a human reader is not an allowable accommodation to ensure the construct remains intact. Students who have mild-moderate intellectual disabilities and limited reading skills will have limited access to the passages without the use of a human reader. Students with vision or hearing impairments who also have limited ability to read, including reading braille, will have limited access to the passages without the use of a human reader. When required to read independently, these groups of students will not have the ability to demonstrate their understanding of the text beyond the ability to decode and read fluently.

For example, without access to the passage, the students will be unable to demonstrate their ability to draw conclusions, compare texts, or identify the central/main idea." (p. 44 - Emphasis added)

-

Even the chief psychometrician for Alpine that performed the FSA validity study would not definitively say that the FSA is valid - Here is an excerpt from an interview:

Within that report, Wiley said, the reviewers did find enough data to support using the test results at an aggregate level, such as school grades. However, he cautioned, many unknowns about the impacts to individual schools and students exist. That means more study would be appropriate, as the report suggests.

"We need to talk about how the aggregate scores can be used," he said. "It should be done with a lot of caution."

Wiley also shied from the idea of calling the test "valid."

"Validity is not a simple yes-no," he said. (Emphasis added).

Given these many problems and others not listed due to time and space constraints, FSCCC heartily agrees with the following sample of the numerous critical comments about both the study and the testing regime in Florida:

"'It's almost asking people to suspend their personal experience for a moment and just trust us that it went well, when people know for a fact that it didn't,' Andrea Messina, executive director of the Florida School Boards Association, told the Miami Herald."

Florida Association of District School Superintendents - "While the report is extensive and thorough, many of the results further confirm Florida superintendents' valid concerns regarding the validity of the FSA for use in teacher evaluations and school grade calculations. Superintendents firmly believe that the 2015 FSA results should be used as baseline data only."

Tampa Bay Times Columnist John Romano - "This is what has come of the state's zealous adherence to Jeb Bush's educational reforms. Lawmakers have taken potential solutions and rammed them so far down the throats of parents and educators that additional discussion is nearly impossible.

The result is conflict. And confusion. And a generation of students being used as political pawns.

The problem is, our Legislature has destroyed the value of these concepts with nonsensical overkill. They've given standardized tests -- and thus the specific curriculum they are based on -- so much weight in classrooms, budgets and careers that these reforms have swallowed education."

Florida Education Association - "The report noted that the testing season in 2015 was a mess, that the test results shouldn't be used as prominently as they are by the state and the questions from another state don't reflect Florida's standards," Florida Education Association spokesman Mark Pudlow said in a statement. "That's far from a ringing endorsement of the DOE's approach."

For all of these reasons, FSCCC calls for the cancellation of the AIR contract and the return of local control, with districts choosing their own standards, tests, and curriculum as it was prior to 1994, given that federal and statewide interventions have done nothing to improve academic achievement or close gaps and have done much to harm students and teachers.
